AI-Powered Moderation: Nonlinear Growth of Data Quantity and Quality

By Fastuna

At first glance, AI-powered moderation (also known as AI-powered conversational research) may seem similar to an online survey. However, it has game-changing features that are revolutionising the way insights are collected.

In this article, we share the findings of internal pilots we ran with our clients while developing and testing our new flagship research solution, Fastuna AI.

AI-powered moderation provides more meaningful data than standard open-ended questions

As researchers, we often want to include open-ended questions in quantitative studies, such as usage and attitude (U&A) studies. However, in our experience, about three open-ended questions per survey is the practical maximum. For that reason, we were never comfortable automating a qualitative study by simply adding a series of open-ended questions: the quality of the verbatims just wasn’t satisfactory. This year, that has changed substantially.

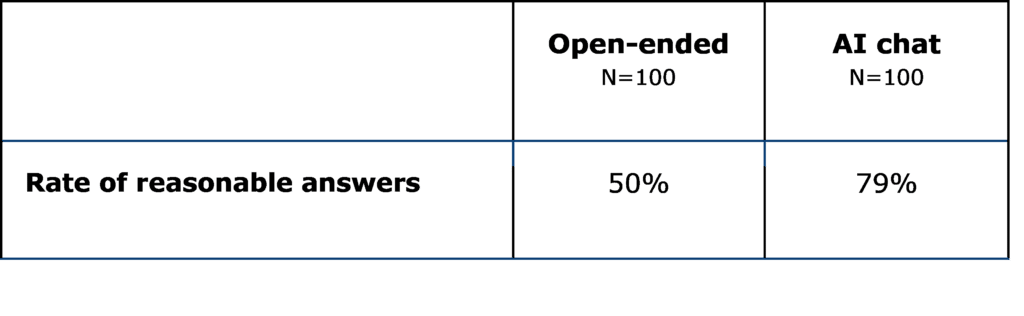

An AI-powered moderator handles such tasks far better. We compared responses to open-ended questions in a quantitative study with those given to our conversational agent, and the results were pleasantly surprising.

In a standard quantitative questionnaire, answers to the open-ended question “What was the overall expression of the product idea?” are 22 characters long on average.

Our AI-powered moderator asks three questions instead of one: the respondent sees the original question followed by two “probing” questions whose content depends on the respondent’s previous answers. Our pilots indicate that probing improves responses non-linearly. With three questions instead of one, respondents write not just three but nearly seven (!) times as many characters: 151 on average, compared with 22.
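To make the probing flow concrete, here is a minimal Python sketch of one original question followed by two answer-dependent follow-ups. It is not Fastuna’s implementation: `make_probe` is a hypothetical stand-in for the language-model call that would actually write each probe, and its word-count rule exists only for illustration. The respondent is passed in as an `ask` callable, so the flow can be scripted for testing or wired to a chat interface.

```python
from typing import Callable, List


def make_probe(question: str, answers: List[str]) -> str:
    """Hypothetical stand-in for the language-model call that writes a probe.

    A real moderator would condition on the question and the whole dialogue;
    this toy rule only looks at how long the latest answer is.
    """
    latest = answers[-1]
    if len(latest.split()) < 5:
        return "Could you tell me a bit more about that?"
    return "What makes you say that? Could you give an example?"


def run_probed_question(question: str, ask: Callable[[str], str], n_probes: int = 2) -> List[str]:
    """Ask the original question, then n_probes follow-ups built from the answers so far."""
    answers = [ask(question)]
    for _ in range(n_probes):
        answers.append(ask(make_probe(question, answers)))
    return answers


def total_characters(answers: List[str]) -> int:
    # Response volume as the number of characters across all turns
    return sum(len(a) for a in answers)


# Usage with a scripted respondent
scripted = iter([
    "Looks nice",
    "I like the colour and the shape of the pack",
    "I would buy it for my kids' lunchboxes",
])
answers = run_probed_question(
    "What was the overall expression of the product idea?",
    lambda q: next(scripted),
)
print(len(answers))               # 3
print(total_characters(answers))  # 91
```

Counting characters across all turns, as `total_characters` does, is the same kind of volume measure as the 22 and 151 character averages from our pilots.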

More insights

We also compared the content of verbatims collected in quantitative surveys and in conversational research. The conversational responses are not only more granular but also contain more valuable insights, such as usage occasions, problems and needs.

This gain is crucial, particularly in highly competitive markets. At advanced stages of product development, identifying such latent insights is often the only way brands can differentiate themselves.

Honest responses on delicate subjects

In our pilots, the auto-moderator established trust with respondents and created a safe space for open and honest communication. This sense of safety stems from the belief that a robot is objective and navigates the conversation impartially. Participants appreciate the auto-moderator’s non-judgmental nature, which makes them more willing to share personal experiences and insights.

In this safe environment, respondents open up about deeply personal subjects, discussing sensitive health-related topics with a level of candour that can be hard to achieve in traditional human-led interviews. The auto-moderator also facilitates discussions of intimate topics, such as sexual behaviour and personal hygiene.

Imitating human behaviour for effective responses

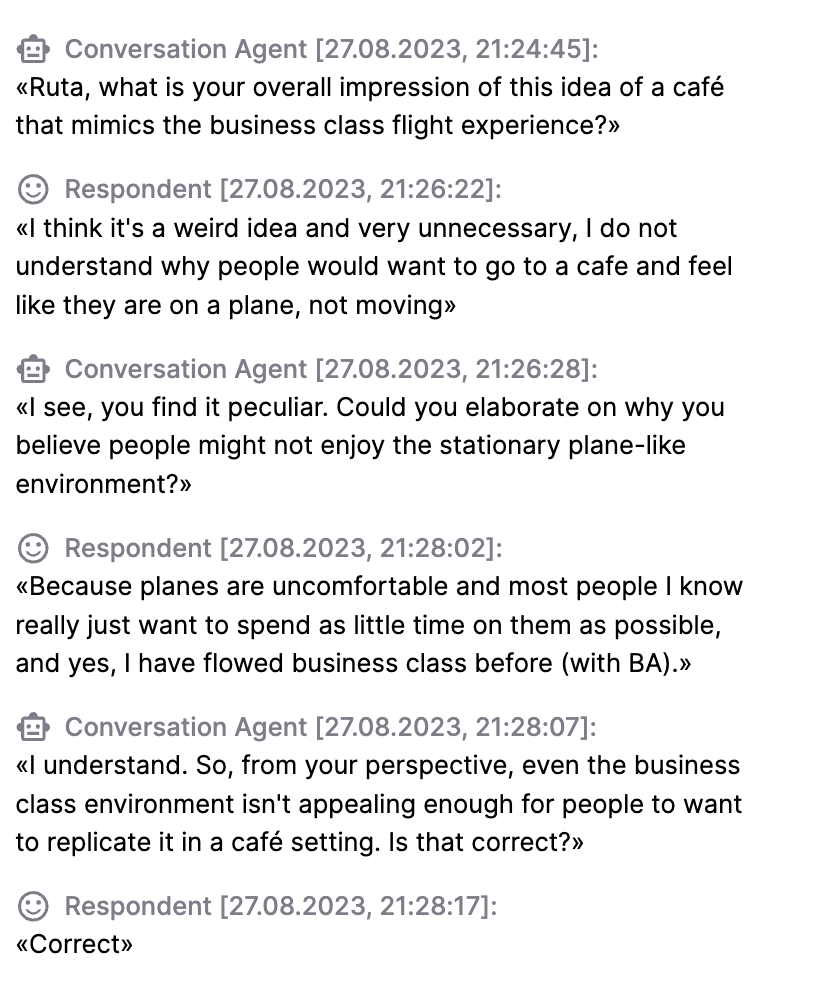

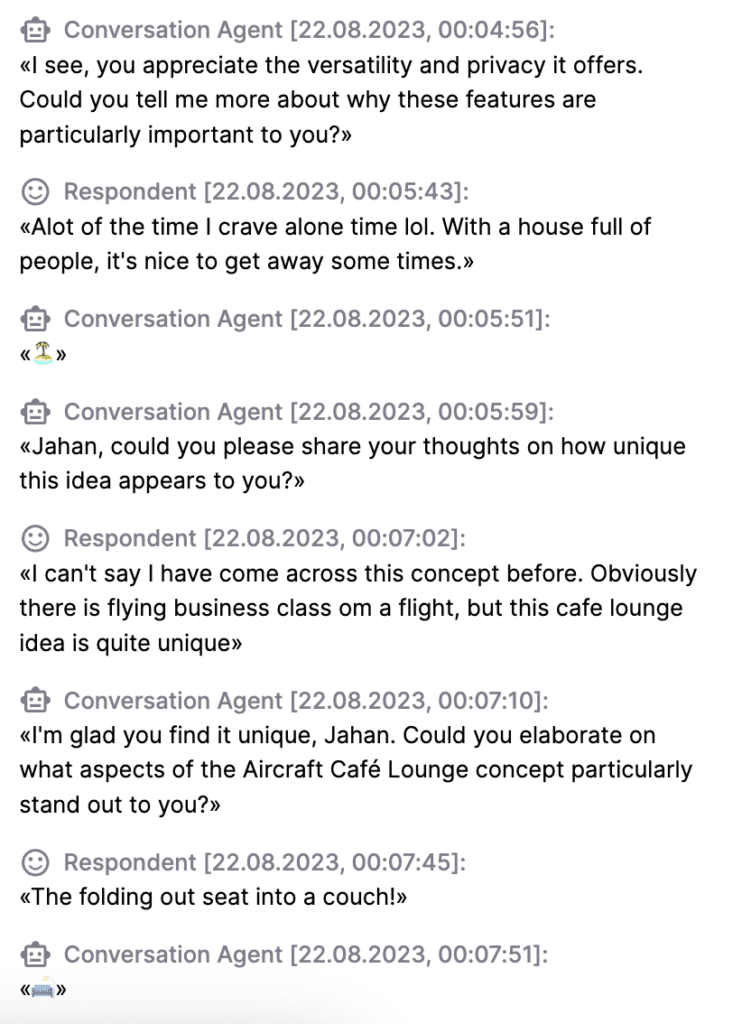

What makes our AI moderator stand out is its ability to collect more meaningful data. Respondents know they are talking to a machine, but the chat flows much like an ordinary human conversation.

We’ve taught Mia (yes, our conversational agent has a name!) to engage with respondents and show interest in their answers. She responds with emojis, thanks people for detailed answers and asks them to clarify the points they make. Mia loves to communicate and makes sure people feel heard and valued.

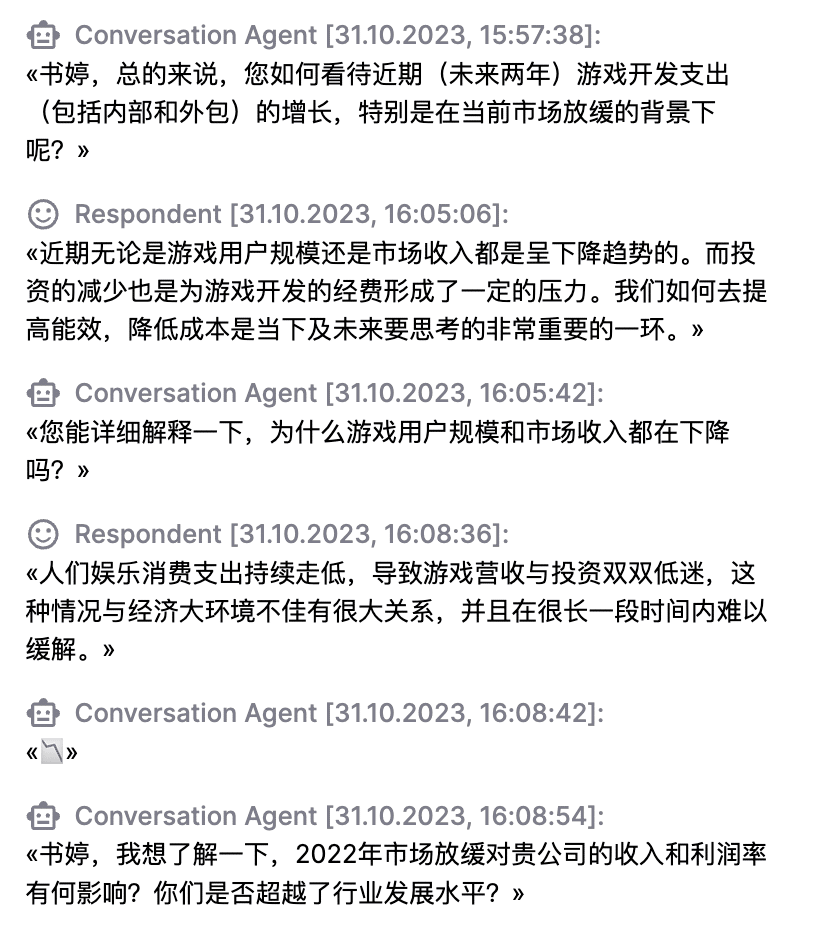

Any language – natural or professional

The AI moderator can speak many languages. It instantly translates the discussion guide and asks questions in the respondent’s language, which allows managers to collect consumer feedback worldwide without leaving their comfy headquarters.

Conversational AI is trained on data from diverse professional fields, which enables it to understand complex terminology. This is essential for building rapport between the AI moderator and a respondent, which in turn leads to better responses. For managers, the advantage is clear: such studies are easy to set up and run (good luck finding a qual expert willing to interview an IT architect or a professional gamer), and they scale.

Our three-step data-checking approach for AI-powered moderation

Still, because we recruit real people for our studies, fraudulent respondents can be a problem. Some are not in the mood to answer the questions properly, while others want to test the moderator’s limits.

More than 95% of interviews in a conversational study are valid; the remainder, under 5%, is culled manually. To detect fraudulent interviews and exclude them from the analysis, we use a comprehensive approach that includes the following steps:

- Meaningfulness analysis of each response. This lets us identify outright nonsense or gibberish, such as “qweroijwoirgjn” or “idk”.

- Detection of threats, hate speech, harassment, sexually explicit content or violence. Interviews with such content are given a special status for manual cross-checking before they can be included in the analysis.

- Situational moderation of how the dialogue develops. Before generating the next message, the agent checks whether the respondent’s answer contains anything unusual that needs handling, such as a counter-question, a negative reaction or a refusal to answer. It could also be a sarcastic remark, a joke or even flirting with the agent, all of which require extra attention.

This conversation management logic allows us to enrich responses and minimise the risk of collecting low-quality data.
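The three checks can be sketched in Python as follows. This is a deliberately crude illustration under our own assumptions, not the production logic: the filler list, the consonant-run rule for gibberish, the keyword lists standing in for a real moderation classifier and the refusal phrases are all hypothetical. In line with the manual culling described above, the sketch only assigns a `suspect` or `manual_review` status for a human to act on; it never deletes an interview.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Step 1 (hypothetical rules): filler answers and keyboard-mash gibberish
FILLERS = {"idk", "dunno", "no idea", "n/a", "nothing", "-", "..."}
CONSONANT_RUN = re.compile(r"[bcdfghjklmnpqrstvwxz]{4,}")


def is_meaningless(answer: str) -> bool:
    text = answer.strip().lower()
    if not text or text in FILLERS:
        return True
    # A single long token containing 4+ consonants in a row looks like keyboard mashing
    return len(text.split()) == 1 and len(text) >= 8 and bool(CONSONANT_RUN.search(text))


# Step 2 (placeholder keywords): a real system would call a trained moderation classifier
SENSITIVE_TERMS = {
    "threat": ("kill you", "hurt you"),
    "harassment": ("you idiot", "shut up"),
}


def sensitive_categories(answer: str) -> List[str]:
    text = answer.lower()
    return [cat for cat, terms in SENSITIVE_TERMS.items() if any(t in text for t in terms)]


# Step 3 (hypothetical phrases): spot situations the next message must handle
REFUSALS = ("prefer not to", "don't want to", "rather not")


def situation(answer: str) -> Optional[str]:
    text = answer.strip().lower()
    if any(phrase in text for phrase in REFUSALS):
        return "refusal"
    if text.endswith("?"):
        return "counter_question"
    return None


@dataclass
class InterviewCheck:
    status: str  # "valid", "suspect" or "manual_review"; humans make the final call
    reasons: List[str] = field(default_factory=list)


def check_interview(answers: List[str], max_meaningless: int = 1) -> InterviewCheck:
    # Sensitive content always goes to manual cross-checking first
    flags = sorted({cat for a in answers for cat in sensitive_categories(a)})
    if flags:
        return InterviewCheck("manual_review", flags)
    meaningless = sum(is_meaningless(a) for a in answers)
    if meaningless > max_meaningless:
        return InterviewCheck("suspect", [f"{meaningless} meaningless answers"])
    return InterviewCheck("valid")


print(situation("Why do you ask?"))                             # counter_question
print(check_interview(["qweroijwoirgjn", "idk", "ok"]).status)  # suspect
```

In this sketch, `situation` would run on each answer before the next message is generated, while `check_interview` runs once the interview is complete.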